ThiefWatcher, a homemade indoor surveillance system II

In this second article in the series on the ThiefWatcher homemade video surveillance system, I will explain the protocols that the application uses to interact with its components. Each of them can be replaced with a new implementation, which allows a large number of combinations. There is a protocol to communicate with the cameras, another to trigger the system, another to alert the user remotely and, finally, a protocol to exchange photographs and messages so that the server can be managed from client devices.

To start the series from the beginning, this link takes you to the first article of the ThiefWatcher surveillance system series.

In this link you can download the source code of the ThiefWatcher solution, written in C# with Visual Studio 2015.

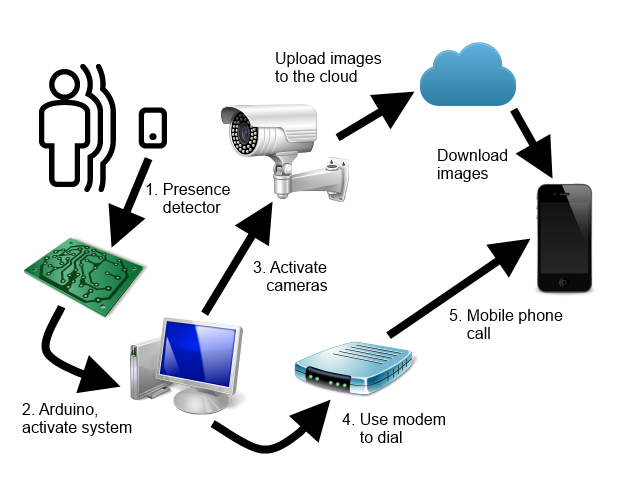

To explain the different protocols, I will follow the order of the application's general scheme.

All of them are defined in the WatcherCommons class library, in the Interfaces namespace. In the application, the registered protocols are listed in the configuration file, in the protocolsSection section:

```xml
<protocolsSection>
  <protocols>
    <protocolData name="Arduino Simple Trigger"
                  class="trigger"
                  type="ArduinoSimpleTriggerProtocol.ArduinoTrigger, ArduinoSimpleTriggerProtocol, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
    <protocolData name="Lync Notifications"
                  class="alarm"
                  type="LyncProtocol.LyncAlarmChannel, LyncProtocol, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
    <protocolData name="AT Modem Notifications"
                  class="alarm"
                  type="ATModemProtocol.ATModemAlarmChannel, ATModemProtocol, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
    <protocolData name="Azure Blob Storage"
                  class="storage"
                  type="AzureBlobProtocol.AzureBlobManager, AzureBlobProtocol, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
    <protocolData name="NetWave IP camera"
                  class="camera"
                  type="NetWaveProtocol.NetWaveCamera, NetWaveProtocol, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
    <protocolData name="VAPIX IP Camera"
                  class="camera"
                  type="VAPIXProtocol.VAPIXCamera, VAPIXProtocol, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
    <protocolData name="DropBox Storage"
                  class="storage"
                  type="DropBoxProtocol.DropBoxStorage, DropBoxProtocol, Version=1.0.0.0, Culture=neutral, PublicKeyToken=null" />
  </protocols>
</protocolsSection>
```

From the program, you can install new protocols with the File / Install protocol/s... menu option. A single class library may contain several protocols, but for clarity I have preferred to put each protocol in its own library. The class attribute specifies what the protocol is used for, and can take the values trigger, alarm, camera or storage.
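The article does not show how the application turns a type attribute into a protocol object. A minimal sketch using standard .NET reflection (Type.GetType plus Activator.CreateInstance) could look like the following; ProtocolLoader and its Create method are hypothetical names, and the sketch assumes each protocol class has a public parameterless constructor:

```csharp
using System;

// Hypothetical helper: builds a protocol object from the assembly-qualified name
// stored in the "type" attribute of a protocolData element.
public static class ProtocolLoader
{
    public static T Create<T>(string assemblyQualifiedName) where T : class
    {
        // Throws if the type or its assembly cannot be found
        Type type = Type.GetType(assemblyQualifiedName, true);

        // Reject types that do not implement the interface expected for the protocol class
        if (!typeof(T).IsAssignableFrom(type))
        {
            throw new InvalidOperationException(
                type.FullName + " does not implement " + typeof(T).FullName);
        }

        // Assumes a public parameterless constructor
        return (T)Activator.CreateInstance(type);
    }
}
```

For example, Create<ITrigger> with the Arduino trigger's type string would return the trigger instance, as long as the runtime can find ArduinoSimpleTriggerProtocol.dll (for instance, in the application folder). Checking the interface up front turns a wrong class attribute into a clear error instead of a later InvalidCastException.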

Trigger protocol

In my case, the system switches to surveillance mode when a sensor detects the presence of an intruder. The sensor activates a relay, which in turn signals an Arduino board; the board then sends a signal through the serial port, and the system's background process switches to active surveillance mode.

The presence detector is a simple switch that closes when it detects movement nearby. These switches normally operate on 220V, since they are designed to switch regular electrical devices such as lighting systems, so the detector cannot be connected to the Arduino board directly.

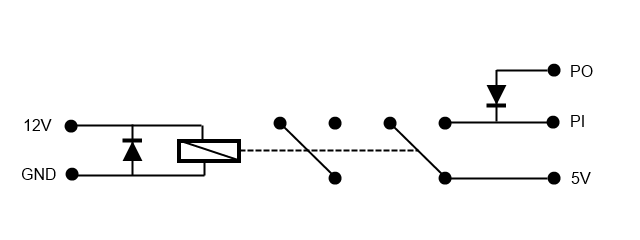

Instead, to completely isolate the computer from the activation power source, I use a relay to close a circuit between two pins of the Arduino board: the 5V supply pin and any input pin. The relay coil runs on 12V, so I also need a power supply that converts the 220V AC into 12V DC.

The diagram of the relay mounting is as follows:

On the left is the input from the power supply; on the right, the connections to the Arduino board. PO can be connected to the ground pin or to any output pin in the LOW state (depending on the connector you use; I used an output pin because the ground pin is far from the 5V pin on my board). PI is the input pin, which is connected to 5V when the relay closes the circuit.

This is the program I have used for the Arduino board:

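```cpp
int pin1 = 28;  // PI: input pin, connected to 5V when the relay closes
int pin0 = 24;  // PO: output pin held LOW, used instead of a ground pin

void setup() {
  // Initialize pins
  pinMode(pin0, OUTPUT);
  digitalWrite(pin0, LOW);
  pinMode(pin1, INPUT);
  digitalWrite(pin1, LOW);  // keep the internal pull-up resistor disabled
  Serial.begin(9600);
}

void loop() {
  int val = digitalRead(pin1);
  if (val == HIGH) {
    // Notify the computer with a single byte of value 1
    Serial.write(1);
  }
  // Check the input once per second
  delay(1000);
}
```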

As for the protocol, it is implemented through the ITrigger interface, defined as follows:

```csharp
using System;

public interface ITrigger
{
    event EventHandler OnTriggerFired;
    string ConnectionString { get; set; }
    void Initialize();
    void Start();
    void Stop();
}
```

- OnTriggerFired: the event through which the protocol notifies the program. The protocol runs on its own separate thread, so the event is raised from that thread; take this into account when handling it.

- ConnectionString: is a text string with the configuration parameters.

- Initialize: this method is called to perform initialization tasks before the protocol is started.

- Start: method to put the protocol in listening mode.

- Stop: method to stop listening.
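To make the threading remark concrete, here is a hypothetical trigger with the same members as ITrigger. It reads bytes from a Stream on a background task and raises OnTriggerFired whenever it receives the value 1 sent by the Arduino program above. The Func<Stream> constructor parameter is an assumption that stands in for opening the serial port (for instance, returning SerialPort.BaseStream) so that the sketch runs without hardware, and the class omits ": ITrigger" only so that it compiles without the WatcherCommons library:

```csharp
using System;
using System.IO;
using System.Threading;
using System.Threading.Tasks;

// Hypothetical trigger sketch; not the ArduinoSimpleTriggerProtocol code.
public class StreamTrigger
{
    private readonly Func<Stream> _openStream;
    private CancellationTokenSource _cts;

    public StreamTrigger(Func<Stream> openStream)
    {
        _openStream = openStream;
    }

    public event EventHandler OnTriggerFired;

    public string ConnectionString { get; set; }

    public void Initialize()
    {
        // Nothing to prepare in this sketch
    }

    public void Start()
    {
        _cts = new CancellationTokenSource();
        CancellationToken token = _cts.Token;

        // Read on a background thread: OnTriggerFired is raised from this thread, not the UI thread
        Task.Run(() =>
        {
            using (Stream s = _openStream())
            {
                int b;
                // Cancellation is checked between bytes, so Stop takes effect after the next read
                while (!token.IsCancellationRequested && (b = s.ReadByte()) != -1)
                {
                    if (b == 1)
                    {
                        OnTriggerFired?.Invoke(this, EventArgs.Empty);
                    }
                }
            }
        });
    }

    public void Stop()
    {
        if (_cts != null) _cts.Cancel();
    }
}
```

Because the event arrives on the worker thread, a Windows Forms handler would have to marshal any user interface update with Control.BeginInvoke.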

The implementation of this protocol is in the ArduinoSimpleTriggerProtocol class library. The configuration string has two parameters: port, which specifies the serial port used to communicate with the Arduino board, and baudrate, which specifies the port speed. For example:

port=COM4;baudrate=9600
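All the protocols use this same key=value;key=value format for their connection strings. A hypothetical parser for it, not taken from the ThiefWatcher source, could be as simple as this:

```csharp
using System;
using System.Collections.Generic;

public static class ConnectionStringParser
{
    // Splits "key1=value1;key2=value2" into a dictionary with case-insensitive keys.
    // Empty segments are ignored and only the first '=' separates key and value.
    public static Dictionary<string, string> Parse(string connectionString)
    {
        var result = new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase);
        if (string.IsNullOrEmpty(connectionString)) return result;

        foreach (string part in connectionString.Split(new[] { ';' }, StringSplitOptions.RemoveEmptyEntries))
        {
            int eq = part.IndexOf('=');
            if (eq <= 0) continue; // skip entries without a key
            result[part.Substring(0, eq).Trim()] = part.Substring(eq + 1).Trim();
        }
        return result;
    }
}
```

With the example above, Parse returns port = COM4 and baudrate = 9600, and the trigger would convert the speed with int.Parse. With this simple format, values cannot contain the ';' character.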

Camera control protocol

Once the system is activated, the cameras that we have connected will be turned on. The interface that is used to control them is IWatcherCamera, defined as follows:

```csharp
using System;
using System.Drawing;
using System.Windows.Forms;

// Event arguments that carry a new frame read from the camera
public class FrameEventArgs : EventArgs
{
    public FrameEventArgs(Bitmap bmp)
    {
        Image = bmp;
    }

    public Bitmap Image { get; private set; }
}

public delegate void NewFrameEventHandler(object sender, FrameEventArgs e);

public interface IWatcherCamera
{
    event NewFrameEventHandler OnNewFrame;
    Size FrameSize { get; }
    string ConnectionString { get; set; }
    string UserName { get; set; }
    string Password { get; set; }
    string Uri { get; set; }
    int MaxFPS { get; set; }
    bool Status { get; }
    ICameraSetupManager SetupManager { get; }
    void Initialize();
    void ShowCameraConfiguration(Form parent);
    void Start();
    void Close();
}
```

The FrameEventArgs class is used with the event fired when a new image is ready, to pass that image to the application in the form of a Bitmap object. The interface members are as follows:

- OnNewFrame: the event to subscribe to in order to be notified every time a new camera image is ready. Keep in mind that the camera also reads its images on its own separate thread, so you will have to take this into account in the handler for this event.

- FrameSize: Contains the dimensions of the images as configured in the camera.

- ConnectionString: text string with the parameters to configure the camera.

- UserName: user name to access the camera.

- Password: password to access the camera.

- Uri: the camera address.

- MaxFPS: Maximum number of frames per second to be read.

- Status: true when the camera is working.

- SetupManager: gives access to the camera's configuration system, in order to subscribe to events that notify when certain parameters change.

- Initialize: used to perform camera initialization tasks.

- ShowCameraConfiguration: displays a dialog box to configure the camera.

- Start: turns the camera on.

- Close: turns the camera off.
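The article does not say how MaxFPS is enforced, and a camera may also accept a frame rate setting of its own. One client-side option, sketched here with a hypothetical FrameThrottle class, is to drop any frame that arrives less than 1/MaxFPS seconds after the last delivered one before raising OnNewFrame. Treating MaxFPS values of zero or less as "no limit" is an assumption:

```csharp
using System;

// Hypothetical helper: decides whether a frame read from the camera should be
// delivered, so that no more than maxFps frames per second are raised.
public class FrameThrottle
{
    private readonly TimeSpan _minInterval;
    private DateTime? _lastDelivered;

    public FrameThrottle(int maxFps)
    {
        // Assumption: maxFps <= 0 means no limit
        _minInterval = maxFps > 0 ? TimeSpan.FromSeconds(1.0 / maxFps) : TimeSpan.Zero;
    }

    // Returns true (and records the time) when enough time has passed since the last delivered frame
    public bool ShouldDeliver(DateTime now)
    {
        if (_lastDelivered.HasValue && now - _lastDelivered.Value < _minInterval)
            return false;

        _lastDelivered = now;
        return true;
    }
}
```

The camera thread would call ShouldDeliver(DateTime.UtcNow) for every frame it decodes and raise OnNewFrame only when it returns true.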

I have implemented two different camera protocols. The NetWaveProtocol project implements the NetWave protocol and is based on the linked article.

The other protocol is in the VAPIXProtocol project and implements the VAPIX protocol, also based on the previously linked article.

Both protocols use the same connection string, with three parameters, url, userName and password, as in this example:

url=http://192.168.1.20;userName=admin;password=123456
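Instead of hand-written parsing, the .NET DbConnectionStringBuilder class (System.Data.Common) already understands this key=value format and treats keys as case-insensitive. The CameraSettings class below is a hypothetical example that maps the camera string onto the values IWatcherCamera exposes, validating url as an absolute URI:

```csharp
using System;
using System.Data.Common;

// Hypothetical settings holder; not part of the NetWave or VAPIX projects.
public class CameraSettings
{
    public Uri Uri { get; set; }
    public string UserName { get; set; }
    public string Password { get; set; }

    // Reads "url=...;userName=...;password=..." into a CameraSettings object
    public static CameraSettings FromConnectionString(string connectionString)
    {
        var builder = new DbConnectionStringBuilder { ConnectionString = connectionString };
        var settings = new CameraSettings();
        object value;

        if (builder.TryGetValue("url", out value))
            settings.Uri = new Uri((string)value, UriKind.Absolute);
        if (builder.TryGetValue("userName", out value))
            settings.UserName = (string)value;
        if (builder.TryGetValue("password", out value))
            settings.Password = (string)value;

        return settings;
    }
}
```

Missing keys simply leave the corresponding property null, so the camera protocol can decide whether to fail or use defaults.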

The ICameraSetupManager interface is very simple:

```csharp
using System;

public interface ICameraSetupManager
{
    event EventHandler OnFrameSizeChanged;
    void ReloadSettings();
}
```

It consists only of an event that notifies when the camera image size changes (I mainly use it to resize the camera windows), and the ReloadSettings method, which forces the parameter values stored in the camera to be read again.
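A hypothetical implementation of this contract could re-read the frame size in ReloadSettings and raise OnFrameSizeChanged only when the size has actually changed. In this sketch the Func<Size> delegate is an assumption that stands in for the camera-specific request that reads the setting, and the interface declaration is omitted so that it compiles on its own:

```csharp
using System;
using System.Drawing;

// Hypothetical setup manager sketch with the members of ICameraSetupManager.
public class PollingSetupManager
{
    private readonly Func<Size> _readFrameSize;
    private Size _lastSize;

    public PollingSetupManager(Func<Size> readFrameSize)
    {
        _readFrameSize = readFrameSize;
        _lastSize = readFrameSize();
    }

    public event EventHandler OnFrameSizeChanged;

    public Size FrameSize
    {
        get { return _lastSize; }
    }

    // Reads the size again and notifies subscribers only if it differs from the cached one
    public void ReloadSettings()
    {
        Size current = _readFrameSize();
        if (current != _lastSize)
        {
            _lastSize = current;
            OnFrameSizeChanged?.Invoke(this, EventArgs.Empty);
        }
    }
}
```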

The camera configuration is stored in the connectionStrings section of the ThiefWatcher application configuration file, and in the custom section camerasSection:

```xml
<camerasSection>
  <cameras>
    <cameraData id="CAMNW"
                protocolName="NetWave IP camera"
                connectionStringName="CAMNW" />
    <cameraData id="VAPIX"
                protocolName="VAPIX IP Camera"
                connectionStringName="VAPIX" />
  </cameras>
</camerasSection>
```
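Each cameraData entry refers to a protocolData entry through protocolName and to a connection string through connectionStringName. A hypothetical LINQ to XML sketch of how a camera id could be resolved to the protocol type to load:

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

public static class CameraConfigReader
{
    // Returns (camera id, protocol type) pairs by matching
    // cameraData/@protocolName against protocolData/@name for camera protocols.
    public static List<KeyValuePair<string, string>> ResolveCameraTypes(
        XElement protocolsSection, XElement camerasSection)
    {
        Dictionary<string, string> typesByName = protocolsSection
            .Descendants("protocolData")
            .Where(p => (string)p.Attribute("class") == "camera")
            .ToDictionary(p => (string)p.Attribute("name"), p => (string)p.Attribute("type"));

        // Throws KeyNotFoundException if a camera names a protocol that is not registered
        return camerasSection
            .Descendants("cameraData")
            .Select(c => new KeyValuePair<string, string>(
                (string)c.Attribute("id"),
                typesByName[(string)c.Attribute("protocolName")]))
            .ToList();
    }
}
```

In the real application, the connection string itself would presumably be read with ConfigurationManager.ConnectionStrings[connectionStringName] from System.Configuration.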

Alarm protocol

Through this protocol the system notifies the user of any incident it detects. Protocols of this type must implement the IAlarmChannel interface, defined as follows:

```csharp
public interface IAlarmChannel
{
    string ConnectionString { get; set; }
    string MessageText { get; set; }
    void Initialize();
    void SendAlarm();
}
```

- ConnectionString: as in the other protocols, a text string with the configuration parameters.

- MessageText: is the text of a message that the protocol can send along with the warning, if the channel allows it.

- Initialize: to perform the initialization tasks of the protocol.

- SendAlarm: executes the alarm itself. This operation occurs synchronously.

The protocol I have chosen for my own setup is implemented in the ATModemProtocol project. It simply calls one or more phone numbers using an AT modem connected to a serial port on the computer. The connection string has the following parameters:

- port: Identifies the port to which the modem is connected.

- baudrate: baud rate of the port.

- initdelay: Delay in milliseconds before sending an AT command.

- number: comma-separated list of the phone numbers to call.

- ringduration: duration of the call in milliseconds before hanging up.

An example of a connection string is as follows:

port=COM3;baudrate=9600;initdelay=2000;number=XXXXXXXXX;ringduration=20000
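A hypothetical sketch of the calling sequence, not the actual ATModemProtocol code: for each entry in number it waits initdelay milliseconds, sends the standard Hayes dial command ATD followed by ';' and a carriage return, waits ringduration milliseconds and hangs up with ATH. It writes to a TextWriter so that it can be tried without a modem, and it ignores the modem's replies (such as OK), which a real implementation should check:

```csharp
using System;
using System.IO;
using System.Threading;

// Hypothetical dialer sketch for the AT modem alarm channel.
public static class AtDialer
{
    public static void CallAll(TextWriter modem, string numbers, int initDelayMs, int ringDurationMs)
    {
        foreach (string raw in numbers.Split(new[] { ',' }, StringSplitOptions.RemoveEmptyEntries))
        {
            string number = raw.Trim();

            // Give the modem time before sending the command
            Thread.Sleep(initDelayMs);

            // Dial; the trailing ';' returns the modem to command mode
            // instead of waiting for a data connection
            modem.Write("ATD" + number + ";\r");
            modem.Flush();

            // Let the phone ring, then hang up
            Thread.Sleep(ringDurationMs);
            modem.Write("ATH\r");
            modem.Flush();
        }
    }
}
```

With a real modem, the writer could be a StreamWriter over SerialPort.BaseStream, with the port opened using the port and baudrate parameters.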

I have also implemented another example of this protocol that uses the Skype or Lync client to perform the alert, in the LyncProtocol project. To use this protocol you must have the Lync client installed on both the server and the client side, and the connection string is simply a list of Skype / Lync addresses separated by the semicolon character.

Storage protocol

The last protocol is the one used to transmit the images and the control messages between the server and the clients. The images are sent in jpg format, and the messages in JSON format. There are two data structures for message exchange, defined in the Data namespace of the WatcherCommons project. The first one is used to send commands from the clients to the server:

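```csharp
using System.IO;
using System.Runtime.Serialization;
using Newtonsoft.Json;

[DataContract]
public class ControlCommand
{
    public const int cmdGetCameraList = 1;
    public const int cmdStopAlarm = 2;

    public ControlCommand()
    {
    }

    // Reads a command serialized as JSON from the beginning of the stream
    public static ControlCommand FromJSON(Stream s)
    {
        s.Position = 0;
        // The reader is not disposed, so the stream stays open for the caller
        StreamReader rdr = new StreamReader(s);
        string str = rdr.ReadToEnd();
        return JsonConvert.DeserializeObject<ControlCommand>(str);
    }

    // Writes the command as JSON, replacing any previous content of the stream
    public static void ToJSON(Stream s, ControlCommand cc)
    {
        s.Position = 0;
        s.SetLength(0);
        string js = JsonConvert.SerializeObject(cc);
        StreamWriter wr = new StreamWriter(s);
        wr.Write(js);
        wr.Flush();
    }

    [DataMember]
    public int Command { get; set; }

    [DataMember]
    public string ClientID { get; set; }
}
```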

The ControlCommand class represents two different commands, depending on the value of the Command property. cmdGetCameraList (1) is used to get a list of cameras, and cmdStopAlarm (2) is used to stop the surveillance state and return to the standby mode.

The ClientID property specifies a unique identifier to register the client with the application.
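For illustration, here is how a client might produce the content of a cmd_<client ID>.json file. This sketch uses DataContractJsonSerializer, which honors the same [DataContract] and [DataMember] attributes, instead of Newtonsoft.Json so that it has no external dependencies; ControlCommandSketch and CommandJson are hypothetical names. The member order in the output may differ from Newtonsoft's, which does not matter because members are matched by name when the JSON is read:

```csharp
using System.IO;
using System.Runtime.Serialization;
using System.Runtime.Serialization.Json;
using System.Text;

// Hypothetical stand-in for ControlCommand, with the same two data members
[DataContract]
public class ControlCommandSketch
{
    public const int cmdGetCameraList = 1;
    public const int cmdStopAlarm = 2;

    [DataMember]
    public int Command { get; set; }

    [DataMember]
    public string ClientID { get; set; }
}

public static class CommandJson
{
    private static readonly DataContractJsonSerializer Serializer =
        new DataContractJsonSerializer(typeof(ControlCommandSketch));

    // Serializes the command to the JSON text a client would write to its command file
    public static string ToJson(ControlCommandSketch cmd)
    {
        using (var ms = new MemoryStream())
        {
            Serializer.WriteObject(ms, cmd);
            return Encoding.UTF8.GetString(ms.ToArray());
        }
    }

    // Parses the JSON text read from a command file
    public static ControlCommandSketch FromJson(string json)
    {
        using (var ms = new MemoryStream(Encoding.UTF8.GetBytes(json)))
        {
            return (ControlCommandSketch)Serializer.ReadObject(ms);
        }
    }
}
```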

The other data type is CameraInfo, defined as follows:

```csharp
using System.Collections.Generic;
using System.IO;
using System.Runtime.Serialization;
using Newtonsoft.Json;

[DataContract]
public class CameraInfo
{
    public CameraInfo()
    {
    }

    // Reads a JSON list of cameras from the beginning of the stream
    public static List<CameraInfo> FromJSON(Stream s)
    {
        s.Position = 0;
        // The reader is not disposed, so the stream stays open for the caller
        StreamReader rdr = new StreamReader(s);
        return JsonConvert.DeserializeObject<List<CameraInfo>>(rdr.ReadToEnd());
    }

    // Writes the list as JSON, replacing any previous content of the stream
    public static void ToJSON(Stream s, List<CameraInfo> ci)
    {
        s.Position = 0;
        s.SetLength(0);
        string js = JsonConvert.SerializeObject(ci);
        StreamWriter wr = new StreamWriter(s);
        wr.Write(js);
        wr.Flush();
    }

    [DataMember]
    public string ID { get; set; }

    [DataMember]
    public bool Active { get; set; }

    [DataMember]
    public bool Photo { get; set; }

    [DataMember]
    public int Width { get; set; }

    [DataMember]
    public int Height { get; set; }

    [DataMember]
    public string ClientID { get; set; }
}
```

With this data structure the application responds to the commands, indicating the list of cameras and their state. It is also used to send requests to the cameras, to activate or deactivate them, or to take photographs. The properties have the following usage:

- ID: is the unique identifier of the camera.

- Active: Indicates whether the camera is active (true).

- Photo: when it is a request from a client, it is used to indicate that the camera must take a picture.

- Width and Height: Indicate the dimensions of the camera images.

- ClientID: used to identify the client making the request.

The protocol is implemented using the IStorageManager interface, defined as follows:

```csharp
using System.Collections.Generic;
using System.IO;

public interface IStorageManager
{
    string ConnectionString { get; set; }
    string ContainerPath { get; set; }
    void UploadFile(string filename, Stream s);
    void DownloadFile(string filename, Stream s);
    void DeleteFile(string filename);
    bool ExistsFile(string filename);
    IEnumerable<string> ListFiles(string model);
    IEnumerable<ControlCommand> GetCommands();
    IEnumerable<List<CameraInfo>> GetRequests();
    void SendResponse(List<CameraInfo> resp);
}
```

- ConnectionString: text string with configuration parameters.

- ContainerPath: used to identify the file container. It can be a folder path, the name of a blob container, etc.

- UploadFile: uploads a file to the storage medium. The file contents are read from the stream s and stored under the name passed in the filename parameter.

- DownloadFile: downloads the file named filename from the storage location into the stream s.

- DeleteFile: Deletes the file with the specified name.

- ExistsFile: Checks if a file exists with the specified name.

- ListFiles: returns a list of file names that match the pattern indicated in the model parameter.

- GetCommands: gets the commands sent by clients.

- GetRequests: gets the requests sent by the clients to the cameras.

- SendResponse: sends a response to a command or request from a client.

I have implemented this protocol in the AzureBlobProtocol project, using Azure Blob storage. The connection string is the standard Azure Storage connection string, and ContainerPath is the name of the blob container.

But the protocol I use is simpler. It is implemented in the DropBoxProtocol project, and uses Dropbox to exchange the files. On the server side, you do not need a connection string, and the ContainerPath indicates the Dropbox folder path that will be used to perform the file exchange.
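Since the Dropbox client keeps a local folder synchronized, the file operations of IStorageManager can be reduced to ordinary file I/O on ContainerPath. A hypothetical FolderStorage class covering only those members (not GetCommands, GetRequests or SendResponse), and not taken from the DropBoxProtocol project, might look like this:

```csharp
using System.Collections.Generic;
using System.IO;
using System.Linq;

// Hypothetical folder-based storage sketch; ContainerPath is a local (e.g. Dropbox-synced) folder.
public class FolderStorage
{
    public string ContainerPath { get; set; }

    public void UploadFile(string filename, Stream s)
    {
        using (FileStream fs = File.Create(Path.Combine(ContainerPath, filename)))
        {
            s.Position = 0; // assumes a seekable stream, such as a MemoryStream
            s.CopyTo(fs);
        }
    }

    public void DownloadFile(string filename, Stream s)
    {
        using (FileStream fs = File.OpenRead(Path.Combine(ContainerPath, filename)))
        {
            fs.CopyTo(s);
        }
    }

    public void DeleteFile(string filename)
    {
        File.Delete(Path.Combine(ContainerPath, filename));
    }

    public bool ExistsFile(string filename)
    {
        return File.Exists(Path.Combine(ContainerPath, filename));
    }

    // model is a wildcard pattern such as "cmd_*.json"; only file names are returned
    public IEnumerable<string> ListFiles(string model)
    {
        return Directory.GetFiles(ContainerPath, model).Select(f => Path.GetFileName(f)).ToList();
    }
}
```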

The protocol works as follows:

A client connects to the system by sending a cmdGetCameraList command, in JSON text format, in a file named cmd_<client ID>.json. The system registers this client ID, deletes the file and responds with a list of cameras in JSON format in a file named resp_<client ID>.json. The client cannot write another command, nor the server another response, until the previous command or response has been processed and deleted.

Once a client is registered, each camera starts saving jpg files with its current image. There can only be one of these files per camera and client, named <Camera ID>_FRAME_<Client ID>.jpg. Each client takes the file that corresponds to it, processes it and deletes it so that the server can write the next one.

If the client wants to take a picture or to activate or deactivate a camera, it sends a JSON file named req_<client ID>.json containing a CameraInfo structure that describes the request. The server processes it, deletes it and writes a file named resp_<client ID>.json with the new state of the camera to inform the client.
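The rule that a file must be processed and deleted before another one with the same name is written behaves like a one-slot mailbox. A hypothetical sketch of both sides, assuming that each file name has a single writer (the client for cmd_ and req_ files, the server for resp_ and frame files), so that checking for the file before writing is enough:

```csharp
using System.IO;

// Hypothetical one-slot mailbox helpers for the file exchange.
public static class FileMailbox
{
    // Writes the message only if the previous one has already been taken.
    // Assumes a single writer per file name, so Exists + write is not racy.
    public static bool TryPost(string folder, string fileName, string content)
    {
        string path = Path.Combine(folder, fileName);
        if (File.Exists(path)) return false;
        File.WriteAllText(path, content);
        return true;
    }

    // Reads and deletes a pending message; returns null when there is none
    public static string TryTake(string folder, string fileName)
    {
        string path = Path.Combine(folder, fileName);
        if (!File.Exists(path)) return null;
        string content = File.ReadAllText(path);
        File.Delete(path);
        return content;
    }
}
```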

The photos are saved under the name <Camera ID>_PHOTO_yyyyMMddHHmmss.jpg.
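The file names used in this exchange can be gathered in a few helpers. ExchangeNames is a hypothetical class; the formats are the ones described above:

```csharp
using System;
using System.Globalization;

// Hypothetical helpers that build the file names of the exchange protocol
public static class ExchangeNames
{
    public static string Command(string clientId) { return "cmd_" + clientId + ".json"; }
    public static string Request(string clientId) { return "req_" + clientId + ".json"; }
    public static string Response(string clientId) { return "resp_" + clientId + ".json"; }

    public static string Frame(string cameraId, string clientId)
    {
        return cameraId + "_FRAME_" + clientId + ".jpg";
    }

    public static string Photo(string cameraId, DateTime taken)
    {
        // InvariantCulture avoids a non-Gregorian calendar in the server's culture changing the year
        return cameraId + "_PHOTO_" + taken.ToString("yyyyMMddHHmmss", CultureInfo.InvariantCulture) + ".jpg";
    }
}
```

A client could then look for its own frames by passing a pattern such as "*_FRAME_" + clientId + ".jpg" to ListFiles.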

That's all regarding the protocols. In the next article I will explain the client app.